
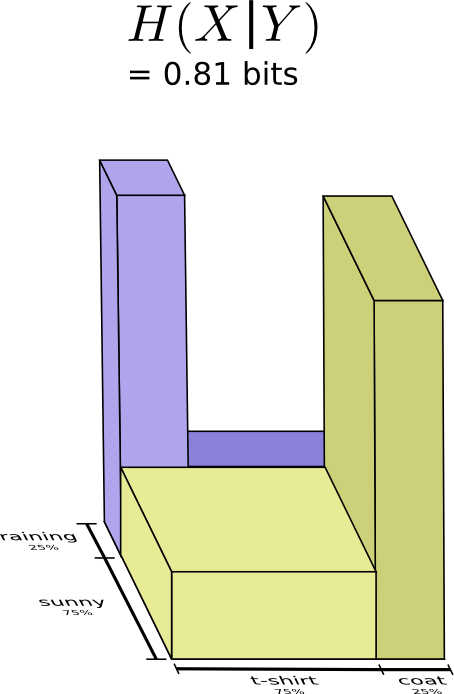

9/10/2023

Conditional entropy

As mentioned before, entropy is a measure of randomness in a probability distribution. The notion of conditional entropy allows us to formulate how much information the random variable A still contains after Bob has observed B. A second measure is the conditional substructure entropy: for an arbitrary substructure of fixed size, it describes the number of bits needed to describe the ...

In (1), the conditional differential entropy is defined. As a result, I defined my conditional differential entropy as

$$h(X \mid Y) = -\iint f(x, y) \log f(x \mid y) \, dx \, dy.$$

Reference (2) discusses similar problems.

Lemma 3.2 (Topological Conditional Entropy, p. 145). Let a measure in P(Y × X) be given with (...) = 0. Then the conditional entropy function H, defined on the set of Radon probability measures on Y × X, is upper semi-continuous at that measure.

Timeit operations: with `repeat_number = 1000000`, each function is timed by a call of the form `a = timeit.repeat(stmt='entropy1(labels)', setup=...)`, where the setup string defines `labels` and runs `from __main__ import entropy1`. The calls assigned to `b` and `c` do the same for `entropy2` and `entropy3`, and a fourth call is assigned to `d`. The pandas approach computes `vc = pd.Series(labels).value_counts(normalize=True, sort=False)` and returns `-(vc * np.log(vc)/np.log(base)).sum()`; the numpy approach returns `-(norm_counts * np.log(norm_counts)/np.log(base)).sum()`.

This online calculator computes the entropy of the random variable Y conditioned on a specific value of the random variable X, and the entropy of X conditioned on a specific value of Y, given a joint distribution table (X, Y) ~ p.
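The per-value conditional entropies mentioned above can be sketched in a few lines of NumPy. This is only an illustration, not the calculator's actual code: the function names and the example `joint` table are hypothetical, rows are assumed to index X and columns Y, and the conditioning value is assumed to have nonzero probability.

```python
import numpy as np

def entropy(p, base=2.0):
    """Shannon entropy of a probability vector; zero entries contribute nothing."""
    p = np.asarray(p, dtype=float)
    p = p[p > 0]
    return float(-(p * np.log(p)).sum() / np.log(base))

def entropy_y_given_x(joint, i, base=2.0):
    """H(Y | X = x_i): normalise row i of the joint table, then take its entropy."""
    row = np.asarray(joint, dtype=float)[i]
    return entropy(row / row.sum(), base)  # assumes p(X = x_i) > 0

def entropy_x_given_y(joint, j, base=2.0):
    """H(X | Y = y_j): normalise column j of the joint table, then take its entropy."""
    col = np.asarray(joint, dtype=float)[:, j]
    return entropy(col / col.sum(), base)  # assumes p(Y = y_j) > 0

# Hypothetical joint table p(x, y): rows index X, columns index Y.
joint = np.array([[0.25, 0.25],
                  [0.50, 0.00]])

h_y_given_x0 = entropy_y_given_x(joint, 0)  # Y is uniform once X = x_0 is known
h_y_given_x1 = entropy_y_given_x(joint, 1)  # Y is certain once X = x_1 is known
h_x_given_y1 = entropy_x_given_y(joint, 1)  # X is certain once Y = y_1 is known
```

Each call conditions on a single observed value, so the results generally differ from row to row; averaging them with the marginal probabilities as weights gives the usual conditional entropy.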

I have been reading a bit about conditional entropy, joint entropy, and so on, but I found this: $H(X \mid Y, Z)$, which seems to denote the entropy associated with X given Y and Z (although I'm not sure how to describe it).

Calculating conditional entropy given two random variables.

This question specifically asks for the "fastest" way, but I only see timings on one answer, so I'll post a comparison of scipy and numpy against the original poster's entropy2 answer, with slight alterations. Four different approaches: (1) scipy/numpy, (2) numpy/math, (3) pandas/numpy, (4) numpy. The code begins with `import numpy as np`, carries the docstring `""" Computes entropy of label distribution. """`, and obtains the label counts with `value, counts = np.unique(labels, return_counts=True)`.

Definition 8.2 (Conditional entropy). The conditional entropy of a random variable is the entropy of one random variable conditioned on knowledge of another random variable, on average.
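The "on average" part of the definition can be made concrete with paired samples: group the observations of X by the value of Y, take the entropy of each group, and weight by how often that value of Y occurs. The sketch below assumes empirical frequencies stand in for the true probabilities; `label_entropy`, `conditional_entropy`, and the sample data are illustrative names, not taken from the answers quoted above.

```python
import numpy as np

def label_entropy(labels, base=None):
    """Entropy of the empirical label distribution (natural log if base is None)."""
    _, counts = np.unique(labels, return_counts=True)
    probs = counts / counts.sum()
    h = -(probs * np.log(probs)).sum()
    return float(h if base is None else h / np.log(base))

def conditional_entropy(x, y, base=None):
    """Estimate H(X | Y) as the sum over y of p(y) * H(X | Y = y)."""
    x = np.asarray(x)
    y = np.asarray(y)
    total = 0.0
    for value in np.unique(y):
        mask = y == value                    # samples where Y takes this value
        total += mask.mean() * label_entropy(x[mask], base)
    return total

# Hypothetical paired observations of X and Y.
x = [0, 1, 0, 1, 0, 0]
y = ["a", "a", "a", "a", "b", "b"]
h = conditional_entropy(x, y, base=2)
```

Here X is a fair coin within the group `y == "a"` (four of six samples) and constant within `y == "b"`, so the estimate is two thirds of a bit.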

Gupta's answer is good but could be condensed. There are a few ways to measure entropy for multiple variables; we'll use two variables, X and Y.
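For two discrete variables X and Y, the standard measures can be written out as below. The notation $p(x, y)$ for the joint distribution and $p(x \mid y)$ for the conditional distribution is assumed here for illustration; the post itself does not fix a notation.

```latex
\begin{align}
H(X, Y) &= -\sum_{x, y} p(x, y) \log p(x, y) \\
H(X \mid Y) &= -\sum_{x, y} p(x, y) \log p(x \mid y) \\
H(X, Y) &= H(Y) + H(X \mid Y)
\end{align}
```

The last line is the chain rule: the uncertainty in the pair equals the uncertainty in Y plus whatever uncertainty about X remains once Y is known.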
